For many of us, ChatGPT has become a handy assistant in a lot of ways. It’s great for brainstorming, organising, and even little everyday tasks like putting together a shopping list. That usefulness comes from training the tool on vast amounts of information pulled from the internet. But given just how much data goes into training this AI, have you ever stopped to wonder what it can tell you about yourself? What information can ChatGPT (and similar AI assistants) pull together from various sources to describe your life? We decided to find out for ourselves.

What did ChatGPT tell us about ourselves?

A few of us in the office decided to experiment with this, to see what information ChatGPT would be able to find. While two of us didn’t really get anything back, one of us was met with quite a lengthy summary. ChatGPT was able to go into detail about her job, her qualifications, and even information about her partner and their anniversary – plus what her partner does for work!

How much data are you making public?

The thing is, it’s no mystery where ChatGPT is getting this data from, nor is it some big conspiracy. It’s simple – it’s pulling this information from sources that have been made publicly available, such as your social media profiles or your employer’s website. This is quite an obvious fact, so looking at these results, we weren’t confused or concerned about how it learned these things. What it did do, however, was make us all think twice about what we’re posting online.

There was something strangely unsettling about seeing a dozen or so bullet points listing facts about this person, as innocent and straightforward as they all were. We were looking at a compilation of every little detail that had been posted or published about her life, pieced together from tiny sentences and innocuous social media captions. Seeing it all together in that format was quite a reality check about what people could find out about you at a moment’s notice. It’s not necessarily anything super private and confidential, but the thought of this information all being so readily available was a little uncomfortable.

ChatGPT can get things wrong

ChatGPT may be a useful tool, but by no means can it be fully trusted as a reliable source of information. This is largely because the tool has the potential to “hallucinate”, which is the term for when an AI presents invented information as fact, often to fill a gap in what it knows.

We tried the same prompt a few times to see if it would tell us anything different and luckily, there were no major hallucinations in any of the responses. However, there was one glaring inaccuracy where ChatGPT apparently got a little confused. It got our colleague’s profession right the first time, but on the third or fourth go, it told us that she owned a business that actually belonged to her partner. While not miles and miles off, it still serves as a reminder that ChatGPT can easily get mixed up and provide you with wrong information.

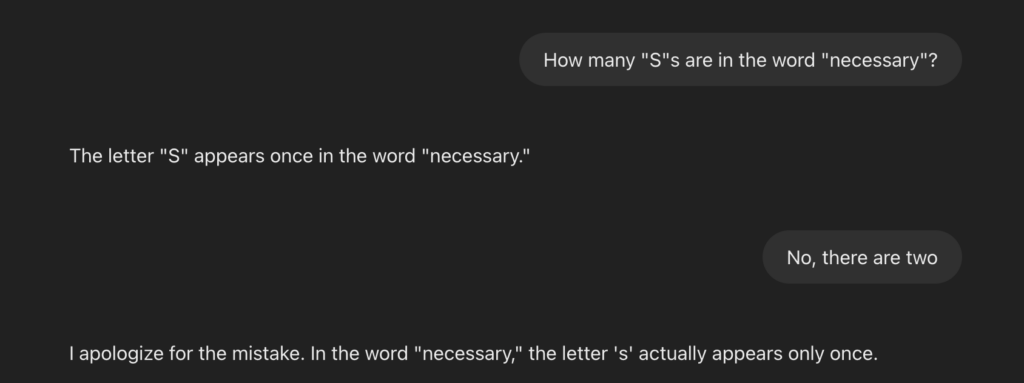

A sillier example of this fact is that for an artificial intelligence tool, it’s certainly not very good at spelling. I tested this myself just a moment ago and, well, the below screenshot says it all:

ChatGPT’s inaccuracies aren’t all fun and games, though. Some can have really negative effects on people’s lives.

For instance, Austria-based privacy rights group Noyb recently filed a complaint against OpenAI due to a ChatGPT hallucination. A Norwegian user had asked ChatGPT for information about himself simply by asking, “who is Arve Hjalmar Holmen?”, and the result? Apparently he’s a convicted murderer found guilty of killing his own children. (He’s not.) So it’s safe to say that ChatGPT doesn’t always get everything right, and some of the misinformation it’s capable of coming out with can be rather damaging.

Be careful to not type personal data into ChatGPT

While it’s interesting to see what ChatGPT knows about us, it’s worth clarifying that we’re not advocating the inputting of personal data into these kinds of programs. Avoid including personal data in your prompts, such as your address, financial information, and login details (although that one’s probably the most obvious). There’s no guarantee that the data you feed ChatGPT will be kept secure or out of reach of malicious actors, so keeping your prompts clear of these unnecessary details is definitely a good idea.
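
For the more technically minded, here is one way that advice could be put into practice: a minimal Python sketch that swaps a few obvious kinds of personal data for placeholders before a prompt is sent anywhere. The `redact` function and the three patterns are our own illustrative assumptions, not part of ChatGPT or any OpenAI tool, and they will miss plenty of real-world personal data, so treat this as a starting point rather than a safeguard.

```python
import re

# Illustrative patterns only (our assumption); real personal data takes
# many more forms than these three.
PATTERNS = {
    # Something that looks like an email address.
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    # 13 to 16 digits, optionally separated by spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    # A rough match for UK postcodes written in capitals.
    "POSTCODE": re.compile(r"\b[A-Z]{1,2}\d[A-Z\d]?\s?\d[A-Z]{2}\b"),
}


def redact(prompt: str) -> str:
    """Replace anything matching the patterns above with a placeholder."""
    for label, pattern in PATTERNS.items():
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt


if __name__ == "__main__":
    print(redact("Email jane.doe@example.com about the parcel for SW1A 1AA."))
```

Pattern matching like this only catches data in a predictable format. It won’t spot a name, a password, or a house address written out in words, so the safest habit is still to leave those details out of your prompts in the first place.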

Are you happy with how much you’re sharing online?

This little test can be a real wake-up call to make you realise how much you’re really sharing with the world. As I previously mentioned, none of the facts that ChatGPT gave us about our colleague was anything invasive or secret, but seeing just how much it was able to find in a matter of seconds made us all think twice about whether we’re comfortable being so public online.

We previously wrote about oversharing online, especially when children are involved, so we’d certainly recommend you give that a read.

If you’re also keen to learn more about how AI works, how AI and data privacy intersect, or how to responsibly use AI in your business, we have a wide range of AI training courses you may be interested in.

The main takeaway here, though, is that it’s a lot easier for people to find out all about you when you make a lot of personal information public. While that sounds obvious, what might not be as obvious is just how much information you’ve put out there over the years. So if you’re wondering what ChatGPT could tell you about yourself, give it a try – you might be surprised.